Source: Quanta, Apr 2022

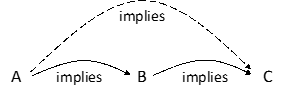

The Kahn-Kalai conjecture is very broad — it’s written in the abstract language of sets and their elements — but it can be understood by considering a simple case. First, imagine a graph: a set of points, or vertices, connected by lines, or edges. To make a random graph, take a biased coin — one that lands on heads with a probability of 1%, or 30%, or any other percentage between zero and 100 — and flip it once for a given pair of vertices.

If the coin lands on heads, connect those vertices with an edge; if the coin lands on tails, don’t. Repeat this process for every possible pair of vertices.

Mathematicians want to know when such a graph is likely to have some sort of interesting structure. Perhaps it will contain a triangle. Or maybe it will have a Hamiltonian cycle, a chain of edges that passes through every vertex exactly once. It’s possible to think about any property, so long as it is “increasing” — that is, if adding more edges to a graph that already contains the property will not destroy the property.

If the probability of the coin turning up heads is low, edges will be rare, and properties like Hamiltonian cycles are not likely to arise.

But if you dial up the probability, something strange happens. Each property has what’s called a threshold: a probability at which the structure emerges, often very abruptly.

Just as ice crystals form when the temperature dips below zero degrees Celsius, the emergence of a particular property suddenly becomes extremely likely as more edges get added to the graph. When edges are added to a random graph of N vertices with a probability of less than log(N)/N, for instance, the graph is unlikely to contain a Hamiltonian cycle. But when that probability is adjusted to be just a hair greater than log(N)/N, a Hamiltonian cycle becomes extremely likely.

Mathematicians want to determine such thresholds for various properties of interest. “Thresholds are maybe the most basic thing you’d try to understand,” Fox said. “I look at a random object; does it have the property that I’m interested in?” Yet while the threshold has been calculated for Hamiltonian cycles and some other specific structures, in most cases it remains very difficult to determine a precise threshold, or even a good estimate of one.

So mathematicians often rely on an easier computation, one that provides a minimum possible value, or lower bound, for the threshold. This “expectation threshold” is calculated by essentially taking a weighted average. “The nice thing about this expectation threshold is it’s very easy to calculate,” said David Conlon, a mathematician at the California Institute of Technology. “Generally speaking, you can calculate this expectation threshold in like two lines for almost anything.”

In 2006, Kahn and Kalai posited that this was actually the worst-case scenario. Their eponymous conjecture states that the gap between the expectation threshold and the true threshold will never be greater than a logarithmic factor. The conjecture, according to Conlon, “essentially takes what is the central question in random graphs and gives a general answer for it.”

But that’s just a simple case. The conjecture pertains to far more than random graphs. If true, it holds for random sequences of numbers, for generalizations of graphs called hypergraphs, and for even broader types of systems. That’s because Kahn and Kalai wrote their statement in terms of abstract sets.

Random graphs constitute one specific case — a random graph can be thought of as a random subset of the set of all possible edges — but there are many other objects that fall within the conjecture’s purview. “Weirdly, when you’re dealing with graphs, proving it in that context would be very hard,” Conlon said. “But somehow, jumping to this abstract setting reveals the navel of the thing.”

It was this generality that made the statement seem so unbelievable. “It was a very brave conjecture,” said Shachar Lovett, a theoretical computer scientist at the University of California, San Diego.

For one thing, it would instantaneously streamline a huge effort in combinatorics — trying to calculate thresholds for different properties. “Questions where seemingly the proofs needed to be very long and complicated suddenly just disappear,” said Alan Frieze, a mathematician at Carnegie Mellon University. “The proofs became just trivial applications of this [conjecture].”

The Sunflower Path

The methods that would eventually lead to the new proof of the Kahn-Kalai conjecture began with a breakthrough on a seemingly unrelated problem. In many ways, the story starts with the sunflower conjecture, a question posed by the mathematicians Paul Erdős and Richard Rado in 1960. The sunflower conjecture considers whether collections of sets can be constructed in ways that resemble the petals of a sunflower.

In 2019, Lovett was part of a team that came very close to a full solution of the sunflower problem. At the time, the work seemed completely separate from the Kahn-Kalai conjecture, which involves considerations of probability. “I didn’t see any connection with our conjecture,” said Kalai. Neither did Lovett, who said that “we weren’t aware of these [other] questions. We cared about sunflowers.”

Park and Pham rewrote the Kahn-Kalai conjecture in a way that let them make use of covers.

The original conjecture puts constraints on what the probability of a weighted coin landing on heads should be in order to guarantee that a random graph or set contains some property.

In particular, it says that the probability has to be at least the expectation threshold for the property multiplied by a logarithmic factor. Park and Pham turned this around: If such a property is not likely to emerge, then the probability assigned to the weighted coin is lower than the expectation threshold multiplied by a logarithmic factor.

That’s where covers come in: When a small cover can be constructed for a subset of structures (like a collection of Hamiltonian cycles), it means that the subset’s contribution to the expectation threshold is small. (Remember that the expectation threshold is calculated by taking a kind of weighted average over all possible structures of a given type.)

So what Park and Pham now needed to show was that if a random set is unlikely to contain one target structure, there must exist a small cover for all such target structures. The bulk of their proof was dedicated to constructing that small cover.

They did this by using a similar piece-by-piece sampling process to the one used in the previous results, while also introducing what Fox called a “very clever counting argument.” One week after their sleepless night in March, they posted their elegant six-page paper online.

“Their proof is super simple. They take the basic idea we developed and [the ideas from] these other papers and add a twist to it,” Lovett said. “And with this new twist, everything somehow becomes much, much easier.”

Frieze agreed. “I cannot explain it, but amazingly it’s true,” he said.

Just like the fractional result, the Kahn-Kalai conjecture, now proved true, automatically implies a cornucopia of related conjectures. But more than that, “this is a powerful proof technique [that] will probably lead to new things,” said Noga Alon, a mathematician at Princeton University. “They had to do it in the right way.”

Park and Pham have now started to apply their method to other problems. They’re particularly interested in getting a more precise understanding of the gap between the expectation threshold and the real threshold.

By proving the Kahn-Kalai conjecture, they’ve shown that this gap is at most a logarithmic factor — but sometimes the gap is smaller, or even nonexistent. At the moment, there’s no broader mechanism for classifying when each of these scenarios might be true; mathematicians have to work it out case by case.

Now, “we think that with this efficient technique we have, we can hopefully be much more precise in pinning down these thresholds,” Pham said.